The ultimate guide to personal data, personal information, personally identifiable information and sensitive information

In the case of a data breach, organizations that have mishandled or improperly stored their customers' personal information can find themselves in a precarious position.

If they’ve failed to identify the personal information they hold regarding their customers, it’ll be much harder to properly assess the damage done. And if they haven't had data minimization strategies in place, more current and former customers can be affected.

When organizations have poor data management practices, their customers' data privacy is often an afterthought. Instead, organizations should focus on adopting a proactive data security framework, where privacy is built into systems, technologies, policies, and processes, an approach often referred to as Privacy by Design.

A key part of this approach is to understand the sensitive data you have, so a level of knowledge about the types of data and the terminology used is a must. This article will help build that baseline knowledge, by outlining what the terms mean both in general and for specific legislation.

Before we do that, let's take a quick look at Privacy by Design.

Benefits of Privacy by Design

Implementing a Privacy by Design approach has many benefits, including enabling early identification and remediation of potential privacy risks, which is a far better outcome than learning about these risks after they’ve been exploited. By adopting this approach, you can feel more confident in meeting your privacy compliance requirements.

Conducting an ongoing data inventory, with established processes for data classification and data minimization, is an essential part of a proactive Privacy by Design approach. This is where monitoring Personally Identifiable Information (PII) across your systems can help you locate and classify data with PII and ensure it is not kept longer than required.
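
As a rough illustration of how a data inventory supports data minimization, the following Python sketch flags inventory items that contain PII and have been kept past their retention period. The `InventoryItem` fields, the file names, and the retention periods are all hypothetical and chosen only for this example; a real inventory would come from your own systems and retention policies.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class InventoryItem:
    name: str            # hypothetical label for a file or dataset
    contains_pii: bool   # result of an earlier classification step
    created: date        # when the item was created
    retention_days: int  # hypothetical retention period from policy


def overdue_pii(items, today):
    """Return items that contain PII and are older than their retention period."""
    return [
        item
        for item in items
        if item.contains_pii
        and today - item.created > timedelta(days=item.retention_days)
    ]


# Illustrative inventory; none of these entries come from a real system.
inventory = [
    InventoryItem("signup_form_2019.csv", True, date(2019, 3, 1), 365),
    InventoryItem("product_catalog.json", False, date(2018, 1, 1), 365),
    InventoryItem("support_tickets_recent.db", True, date(2024, 1, 1), 730),
]

for item in overdue_pii(inventory, today=date(2024, 6, 1)):
    print(item.name)  # prints "signup_form_2019.csv"
```

Only the first item is flagged: the second holds no PII, and the third is still within its retention period.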

Now that we understand the importance of Privacy by Design and data inventories, we need to understand the terminology.

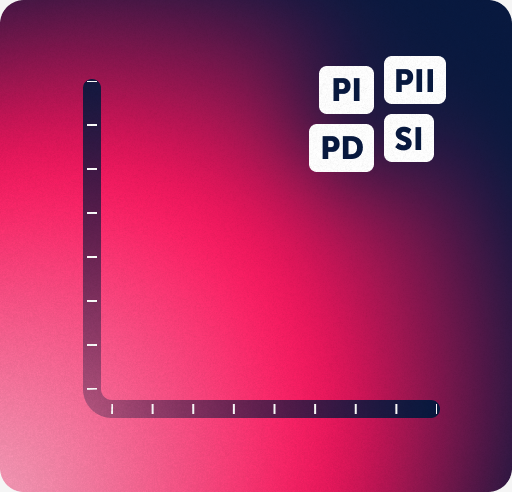

Let's first take a look at some specific privacy terms that are often used interchangeably: Personal Data, Personal Information, Personally Identifiable Information and Sensitive Information.

There’s a lot of overlap in the terms across jurisdictions, but they all cover common ground. Let's start by looking at the high-level differences.

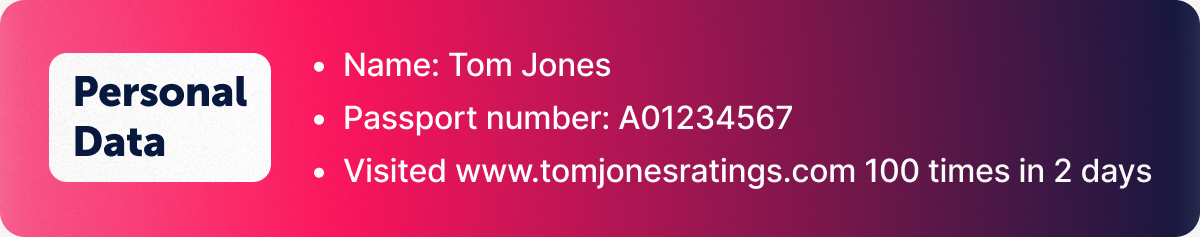

Personal Data (PD)

When talking about Personal Data, many privacy pros are referring to the processing of information as related to the EU’s General Data Protection Regulation (GDPR). ‘Personal Data’ is broad in scope and refers to any information that is “clearly about a particular person.”

GDPR Article 4 gives the following definition for “personal data”:

‘Personal data’ means any information relating to an identified or identifiable natural person (‘data subject’); an identifiable natural person is one who can be identified, directly or indirectly, in particular by reference to an identifier such as a name, an identification number, location data, an online identifier or to one or more factors specific to the physical, physiological, genetic, mental, economic, cultural or social identity of that natural person.

GDPR sets out special categories of personal data that include:

- Race

- Ethnicity

- Political views

- Religion, spiritual or philosophical beliefs

- Biometric data for ID purposes

- Health data

- Sex life data

- Sexual orientation

- Genetic data

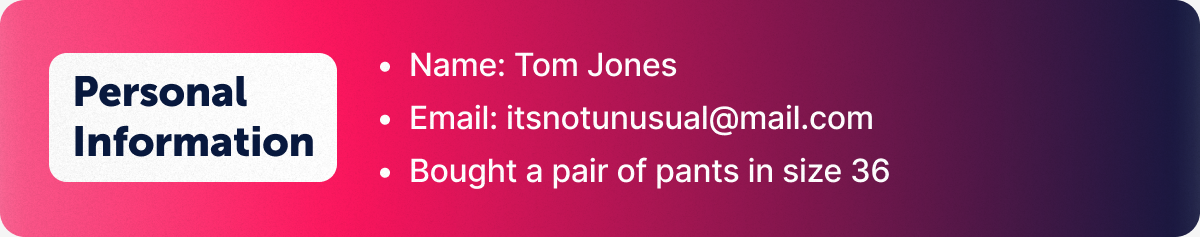

Personal Information (PI)

Many laws, including the Australian Privacy Act and the California Consumer Privacy Act (CCPA), use the term Personal Information rather than Personal Data. Although PI and PD are more alike than not, there are subtle differences between the two, depending on jurisdiction. For example, the GDPR specifies that online identifiers like IP addresses and cookie identifiers are personal data. The Australian Privacy Act does not specifically mention IP addresses or cookie identifiers in its definition of personal information.

The Australian Privacy Act defines 'personal information' as:

Information or an opinion about an identified individual, or an individual who is reasonably identifiable:

- whether the information or opinion is true or not; and

- whether the information or opinion is recorded in a material form or not.

The term ‘personal information’ in the Australian Privacy Act context encompasses a broad range of information and the Act does specify some personally identifiable information examples:

- Sensitive information – includes information or an opinion about an individual’s racial or ethnic origin, political opinion, religious beliefs, sexual orientation or criminal record, provided the information or opinion otherwise meets the definition of personal information

- Health information – which is also ‘sensitive information’

- Credit information

- Employee record information (subject to exemptions), and

- Tax file number information

The more recent California Consumer Privacy Act (CCPA) also takes a broad view, defining personal information as:

“Information that identifies, relates to, describes, is capable of being associated with, or could reasonably be linked, directly or indirectly, with a particular consumer or household.”

CCPA includes the following categories of personal information:

- Identifiers: Name, alias, postal address, unique personal identifier, online identifier, Internet Protocol (IP) address, email address, account name, social security number, driver’s license number, passport number, or other similar identifiers

- Customer records information: Name, signature, social security number, physical characteristics or description, address, telephone number, passport number, driver’s license or state identification card number, insurance policy number, education, employment, employment history, bank account number, credit or debit card number, other financial information, medical information, health insurance information

- Characteristics of protected classifications under California or federal law: Race, religion, sexual orientation, gender identity, gender expression, age

- Commercial information: Records of personal property, products or services purchased, obtained, or considered, or other purchasing or consuming histories or tendencies

- Biometric information: Hair color, eye color, fingerprints, height, retina scans, facial recognition, voice, and other biometric data

- Internet or other electronic network activity information: Browsing history, search history, and information regarding a consumer’s interaction with an Internet website, application, or advertisement

- Geolocation data

- Audio, electronic, visual, thermal, olfactory, or similar information

- Professional or employment-related information

- Education information: Information that is not “publicly available personally identifiable information” as defined in the Family Educational Rights and Privacy Act (20 U.S.C. section 1232g, 34 C.F.R. Part 99)

- Inferences: Inferences that could be used to create a profile reflecting a consumer’s preferences, characteristics, and abilities, to name a few

For a full review of the privacy legislation landscape across the United States, check out this informative infographic from the International Association of Privacy Professionals (IAPP).

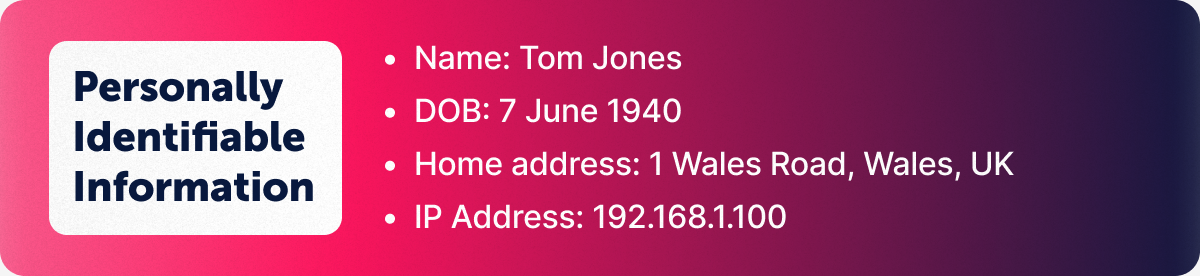

Personally Identifiable Information (PII)

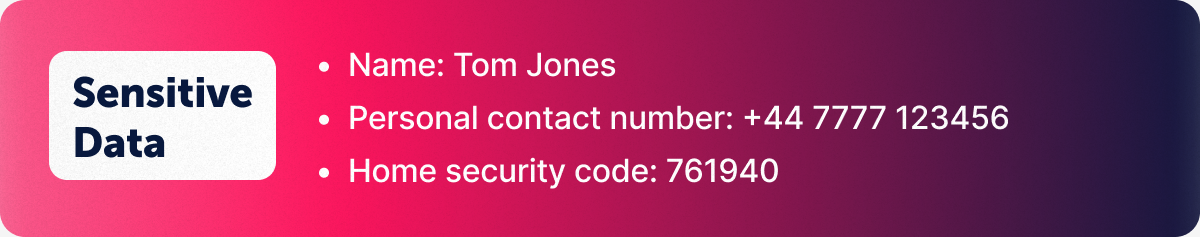

Personally Identifiable Information (PII) terminology is used by both governments and corporations, and generally speaking, it is information that can be used on its own or combined with other information to identify, contact, or locate a single person, or to identify an individual in context.

The term is more commonly used in the United States, where the US Office of Privacy and Open Government defines PII as:

“Information which can be used to distinguish or trace an individual’s identity, such as their name, social security number, biometric records, etc. alone, or when combined with other personal or identifying information which is linked or linkable to a specific individual, such as date and place of birth, mother’s maiden name, etc.”

The National Institute of Standards and Technology’s (NIST) Guide to Protecting the Confidentiality of Personally Identifiable Information (PII) lists the following PII examples:

- Name, such as full name, maiden name, mother’s maiden name, or alias

- Personal identification number, such as social security number (SSN), passport number, driver license number, taxpayer identification number, patient identification number, and financial account or credit card number

- Address information, such as street address or email address

- Asset information, such as Internet Protocol (IP) or Media Access Control (MAC) address or other host-specific persistent static identifier that consistently links to a particular person or small, well-defined group of people

- Telephone numbers, including mobile, business, and personal numbers

- Personal characteristics, including photographic image (especially of face or other distinguishing characteristic), x-rays, fingerprints, or other biometric image or template data (e.g., retina scan, voice signature, facial geometry)

- Information identifying personally owned property, such as vehicle registration number or title number and related information

- Information about an individual that is linked or linkable to one of the above (e.g., date of birth, place of birth, race, religion, weight, activities, geographical indicators, employment information, medical information, education information, financial information)
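
To make a few of these categories concrete, here is a minimal Python sketch that scans free text for three of the identifier types listed above: email addresses, US social security numbers, and IPv4 addresses. The regular expressions and the `scan_for_pii` helper are simplified assumptions written for this example. They are not how any particular product detects PII, and simple patterns like these can produce both false positives and missed matches (for instance, the IPv4 pattern does not check that each octet is 255 or lower).

```python
import re

# Simplified, illustrative patterns; real detection needs validation and context.
PATTERNS = {
    "email": re.compile(r"\b[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}\b"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "ipv4": re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b"),
}


def scan_for_pii(text):
    """Return a dict mapping each pattern label to the matches found in text."""
    findings = {}
    for label, pattern in PATTERNS.items():
        matches = pattern.findall(text)
        if matches:
            findings[label] = matches
    return findings


# Made-up sample text using reserved example values.
sample = "Contact jane.doe@example.com from 192.0.2.10; SSN on file: 123-45-6789."
print(scan_for_pii(sample))
```

Labels with no matches are left out of the result, so an empty dict means none of the three patterns were found.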

The table below lists some specific examples of personal information that can be scanned for in the RecordPoint platform, but keep in mind, this isn’t an exhaustive list.

Across all jurisdictions, it’s key to note that PD, PI and PII can range from sensitive and confidential information, to information that is widely publicly available.

Sensitive Personal Information and sensitive data

Sensitive information is a subset of Personal Information. Most jurisdictions' definitions of sensitive information align, but they each have slight differences in language.

The GDPR classifies certain types of information as sensitive data, which is subject to specifically defined processing conditions. Sensitive data includes information that could cause harm to an individual if used for identification and malicious purposes.

What is sensitive data under GDPR?

- Racial or ethnic origin

- Political opinions

- Religious beliefs

- Genetic data

- Sexual orientation or activities

The Australian Privacy Act

This regulation defines Sensitive Personal Information to mean information or an opinion about an individual’s:

- Racial or ethnic origin

- Political opinions

- Membership of a political association

- Religious beliefs or affiliations

- Philosophical beliefs

- Membership of a professional or trade association

- Membership of a trade union

- Sexual preferences or practices

- Criminal record

The California Privacy Rights Act (CPRA)

Often referred to as CCPA 2.0 and an amendment of the CCPA, this regulation defines Sensitive Personal Information to include:

- Government identifiers, such as Social Security numbers and driver’s license numbers

- Account information (e.g., financial account or credit card numbers in combination with any required access codes or passwords)

- Precise geolocation information

- Racial or ethnic origin, religious or philosophical beliefs, or union membership

- Content of postal mail, email, and text messages, unless the business is the intended recipient of the subject communications

- Genetic data

- Biometric information that uniquely identifies a consumer

- Information concerning a consumer's health, sex life, or sexual orientation
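
The overlap between these lists can be explored with a small lookup. The Python sketch below encodes the three lists from this section as sets and reports which categories all three share. The category labels are shortened and normalized by hand for this example (for instance, "sexual preferences or practices" is treated as `sexual_orientation`), so treat it as a rough aid for comparing the lists in this article, not as a statement of the legal text.

```python
# Hand-normalized labels based only on the lists in this section (an assumption).
SENSITIVE = {
    "GDPR": {
        "racial_or_ethnic_origin", "political_opinions", "religious_beliefs",
        "genetic_data", "sexual_orientation",
    },
    "Australian Privacy Act": {
        "racial_or_ethnic_origin", "political_opinions",
        "political_association_membership", "religious_beliefs",
        "philosophical_beliefs", "professional_association_membership",
        "trade_union_membership", "sexual_orientation", "criminal_record",
    },
    "CPRA": {
        "government_identifiers", "account_information", "precise_geolocation",
        "racial_or_ethnic_origin", "religious_beliefs", "philosophical_beliefs",
        "trade_union_membership", "communication_contents", "genetic_data",
        "biometric_information", "health", "sexual_orientation",
    },
}


def regimes_for(category):
    """List the regimes whose list above includes the given category label."""
    return [name for name, cats in SENSITIVE.items() if category in cats]


# Categories that appear in all three lists.
common = set.intersection(*SENSITIVE.values())
print(sorted(common))
print(regimes_for("criminal_record"))
```

With these labels, the shared categories are racial or ethnic origin, religious beliefs, and sexual orientation, while criminal record appears only in the Australian list.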

Understanding all these terms, and how they may intersect or differ, is a key part of a Privacy by Design approach. Organizations that embed privacy into their systems and processes can gain a competitive advantage and build trust among their customer base.

How can RecordPoint help?

With RecordPoint, you can instantly identify and protect your customers’ data, whether it’s PD, PI, PII or Sensitive Data. With manage-in-place capabilities and easy, flexible integrations, RecordPoint’s platform makes it easy to implement a single data governance strategy that helps mitigate risk and enable regulatory compliance.